Your Safety Net Might Be a Trap

Graveyard of Good Ideas #7: A protective filter, carefully backtested, running in production for weeks. Turns out it was protecting us from our own profits.

We had a filter — nothing fancy, just a simple threshold on a market condition metric that told the system to sit on its hands when the reading was too high. The idea was to keep us out of overextended, risky moves.

It ran for weeks and everything looked normal, until one week the system produced zero trades across the board. Not a slow week — literally nothing.

The natural instinct was to shrug it off. "Market's been weird." "Filter's just being conservative." "We don't have enough N yet to draw conclusions, let's wait."

That last excuse almost killed us.

"Let's Wait for More Data"

You know what "let's wait for more data" sounds like? Discipline. The wise, measured approach of a serious quantitative researcher who doesn't jump to conclusions.

In reality, it's the trading equivalent of a student saying "I'll study when I have better notes." The notes never get better, the studying never happens, and the exam comes whether you're ready or not.

Every day the system didn't trade, we were bleeding money in the most invisible way possible — missed opportunities that never show up on any dashboard or P&L report. All our monitors were green, all health checks passed, and the system was working perfectly. Just perfectly wrong.

We stopped waiting and started asking the only question that matters: why didn't it trade?

"Is the system working?" turned out to be the wrong question entirely, because a system can be technically flawless while doing the exact opposite of what you intended — every line of code executing correctly, every condition evaluated properly, every threshold applied exactly as specified. Our problem wasn't execution. It was the logic itself.

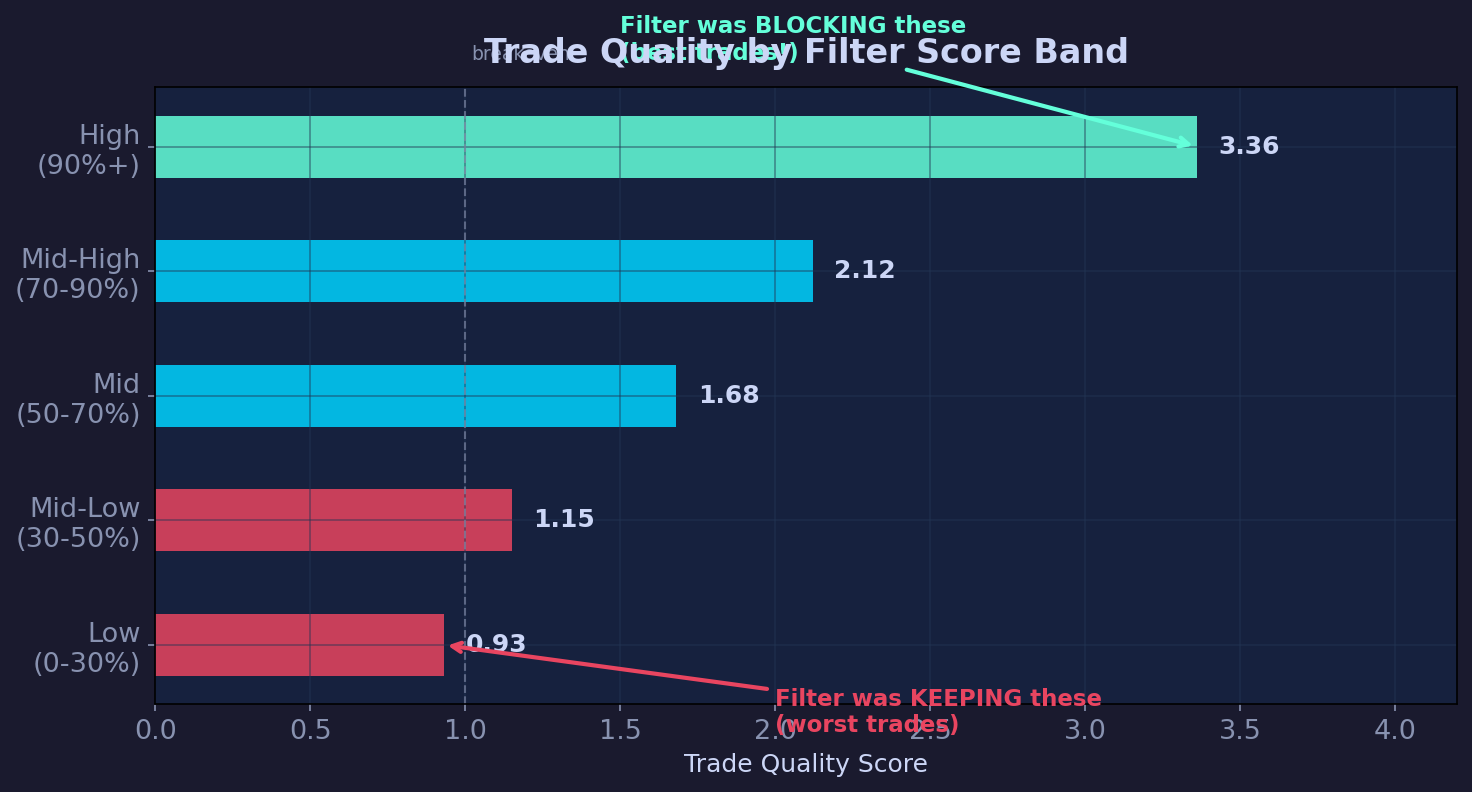

So we pulled the data, grouped every historical trade by the metric the filter was checking, sliced them into bands from low to high, and looked at which group actually made money.
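A minimal sketch of that kind of band breakdown, in Python. It assumes the per-band score is a profit factor (gross profit divided by gross loss, breakeven at 1.0); the post names profit factor later but does not say which score the bands used. The function names, band edges, and trades are made up for illustration.

```python
from bisect import bisect_right

def profit_factor(pnls):
    """Gross profit divided by gross loss; below 1.0 means the group lost money."""
    gains = sum(p for p in pnls if p > 0)
    losses = -sum(p for p in pnls if p < 0)
    if losses == 0:
        return float("inf") if gains > 0 else float("nan")
    return gains / losses

def profit_factor_by_band(trades, edges):
    """Group (metric, pnl) pairs into bands split at `edges`, ordered low to high."""
    bands = [[] for _ in range(len(edges) + 1)]
    for metric, pnl in trades:
        bands[bisect_right(edges, metric)].append(pnl)
    return [(len(band), profit_factor(band)) for band in bands]

# Hypothetical trades: (metric reading at entry, realized P&L)
trades = [(0.1, -2.0), (0.2, 1.0), (0.5, 1.0), (0.6, -1.0), (0.9, 3.0), (0.95, -1.0)]

for count, pf in profit_factor_by_band(trades, edges=[0.33, 0.66]):
    print(count, pf)
# 2 0.5
# 2 1.0
# 2 3.0
```

The analysis only works on trades that were recorded regardless of the filter, for example from a backtest with the filter switched off. If the input is already filtered, the top bands are empty and there is nothing to compare.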

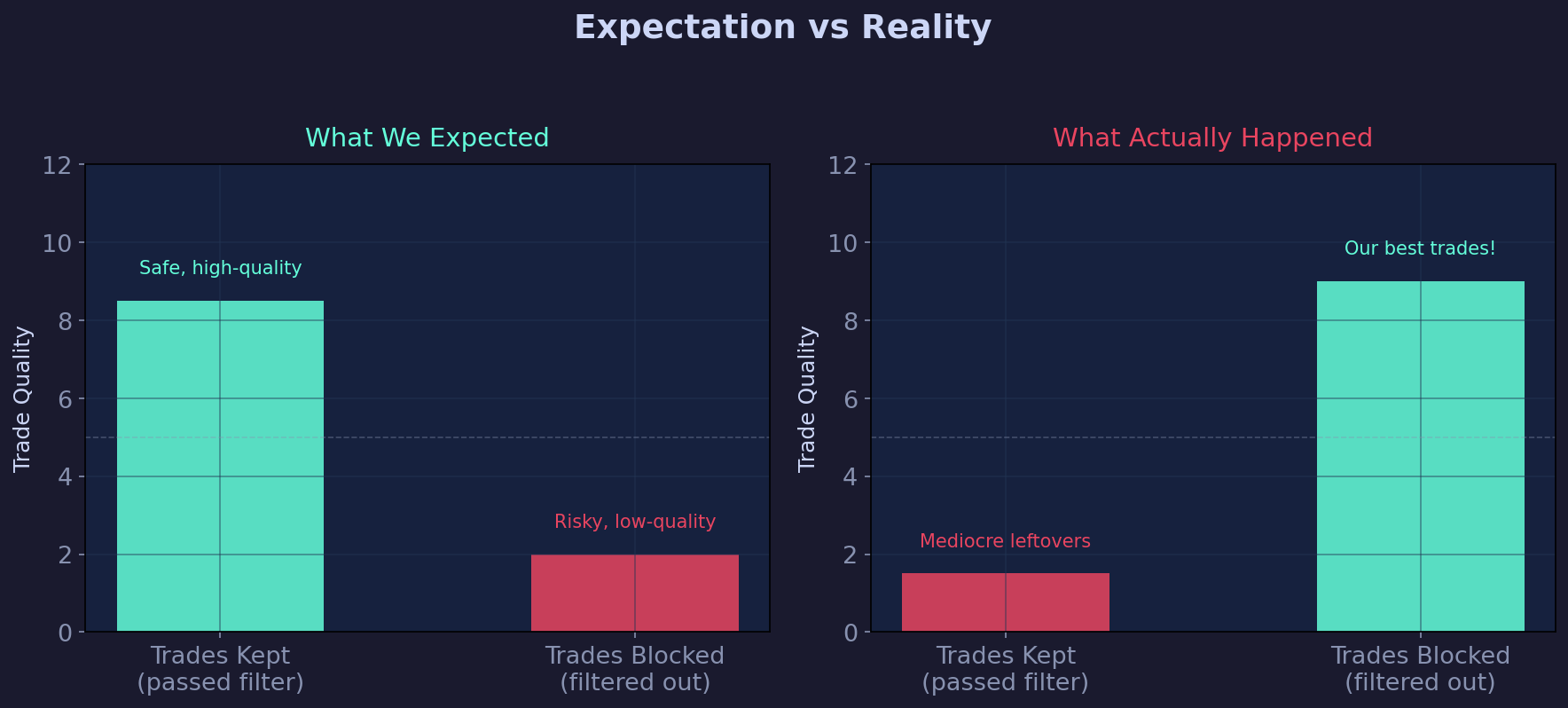

The filter was completely, catastrophically backwards.

The trades it had been blocking (the high-score ones, the ones it was "protecting" us from) turned out to be the best trades in the entire system, while the ones it was letting through were mediocre at best and losing at worst.

The bottom band scored 0.93, below breakeven. The top band it was blocking? 3.36. More than three times the quality, all of it filtered out "for safety."

How Does This Even Happen?

Pretty easily, it turns out. We built the filter, validated it, deployed it, then months later changed a different part of the system without re-testing the filter. That change shifted which market conditions were actually profitable, but the filter was still operating on old assumptions — blocking trades that used to be risky but had since become the best opportunities under the new logic.

This is the part that stings: we have the whole validation toolkit, and we use it. We just used it once and then stopped, assuming the components would stay correct while we changed everything around them.
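One way to keep a filter from going stale is to re-run its core assumption as a check every time anything else in the system changes. The sketch below is an assumption about how such a check could look, not a description of the tooling in the post: `filter_still_helps` and its inputs are hypothetical, and it assumes the filter blocks trades whose metric is above the threshold.

```python
def profit_factor(pnls):
    """Gross profit divided by gross loss."""
    gains = sum(p for p in pnls if p > 0)
    losses = -sum(p for p in pnls if p < 0)
    if losses == 0:
        return float("inf") if gains > 0 else 0.0
    return gains / losses

def filter_still_helps(trades, threshold):
    """True if trades the filter would pass beat the ones it would block.

    trades: (metric, pnl) pairs from an unfiltered backtest of the CURRENT system.
    """
    passed = [pnl for metric, pnl in trades if metric <= threshold]
    blocked = [pnl for metric, pnl in trades if metric > threshold]
    if not passed or not blocked:
        return False  # an empty side means the filter cannot be judged
    return profit_factor(passed) > profit_factor(blocked)

# Hypothetical unfiltered backtest, re-run after an unrelated change
trades_after_change = [(0.2, -1.0), (0.3, 1.0), (0.8, 2.0), (0.9, -0.5)]
still_helps = filter_still_helps(trades_after_change, threshold=0.5)
print(still_helps)  # False: the blocked side is now the better one
```

Wired into whatever runs before a deploy, a `False` here would have flagged the filter on the day the other change landed rather than weeks later.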

The Fix

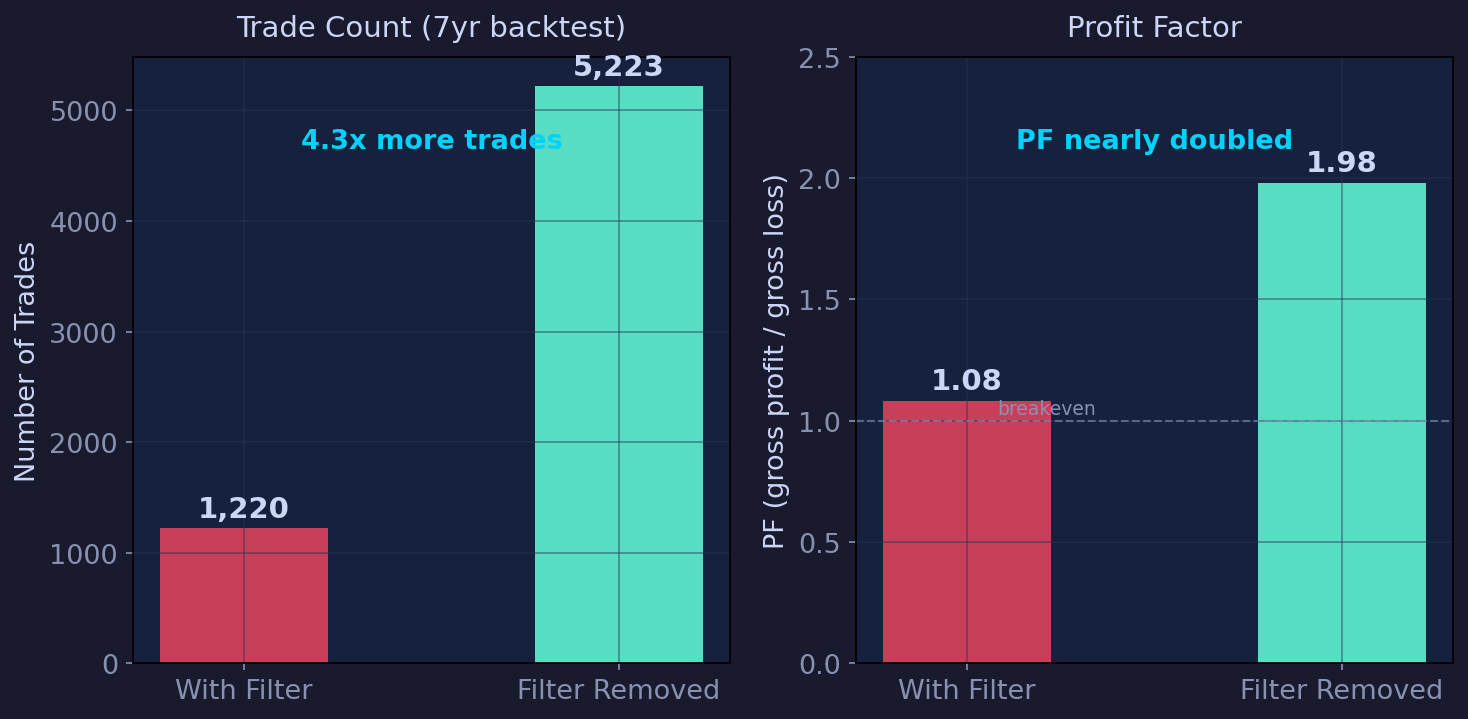

We removed the filter entirely: we just deleted the condition.

Trade count jumped 4.3x, profit factor nearly doubled, and every single validation fold came back positive. We ran five separate verification tests and not a single one favored keeping the filter. It wasn't a safety net. It was a cage.

The specific habit we adopted after this: every day, even when nothing trades, we ask two questions. What prevented entries? And does that make sense given what the market actually did? If the market moved 2% and our system stayed flat, that's not caution. It's a broken assumption hiding behind a green dashboard.
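That daily question can be partly automated. The following is a rough sketch under stated assumptions: the system logs every candidate entry along with the name of whatever blocked it, and `audit_day`, the record layout, and the filter name are all hypothetical. The 2% alert level is taken from the example above.

```python
from collections import Counter

def audit_day(candidates, market_move_pct, move_alert_pct=2.0):
    """Summarize one day's candidate entries and flag a suspicious flat day.

    candidates: dicts like {"symbol": "ABC", "blocked_by": "metric_filter"},
                with blocked_by set to None when the trade was taken.
    """
    taken = sum(1 for c in candidates if c["blocked_by"] is None)
    reasons = Counter(c["blocked_by"] for c in candidates if c["blocked_by"] is not None)
    # A big market move with zero entries deserves a human look
    suspicious = taken == 0 and abs(market_move_pct) >= move_alert_pct
    return {"taken": taken, "blocked_by": dict(reasons), "suspicious": suspicious}

# Hypothetical day: three candidates, all stopped by the same filter
day = [
    {"symbol": "AAA", "blocked_by": "metric_filter"},
    {"symbol": "BBB", "blocked_by": "metric_filter"},
    {"symbol": "CCC", "blocked_by": "metric_filter"},
]
report = audit_day(day, market_move_pct=2.4)
print(report)
# {'taken': 0, 'blocked_by': {'metric_filter': 3}, 'suspicious': True}
```

A report like this does not answer whether the block made sense, but it names the condition responsible, which is the thing a green dashboard hides.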

Data doesn't age into insight on its own. You have to poke it, ask it uncomfortable questions, and dig in immediately when something smells off: a week of no trades, a metric that seems too stable, a filter that never fires. We lost weeks of opportunity because we mistook laziness for patience. Checking is always cheaper than waiting.

This is not investment advice. Past results don't guarantee future performance. All analysis reflects our internal research process only.